Injection Testing: Approach, Tooling & Risk

BixeLab / Biometix — Injection Testing Overview

Objectives

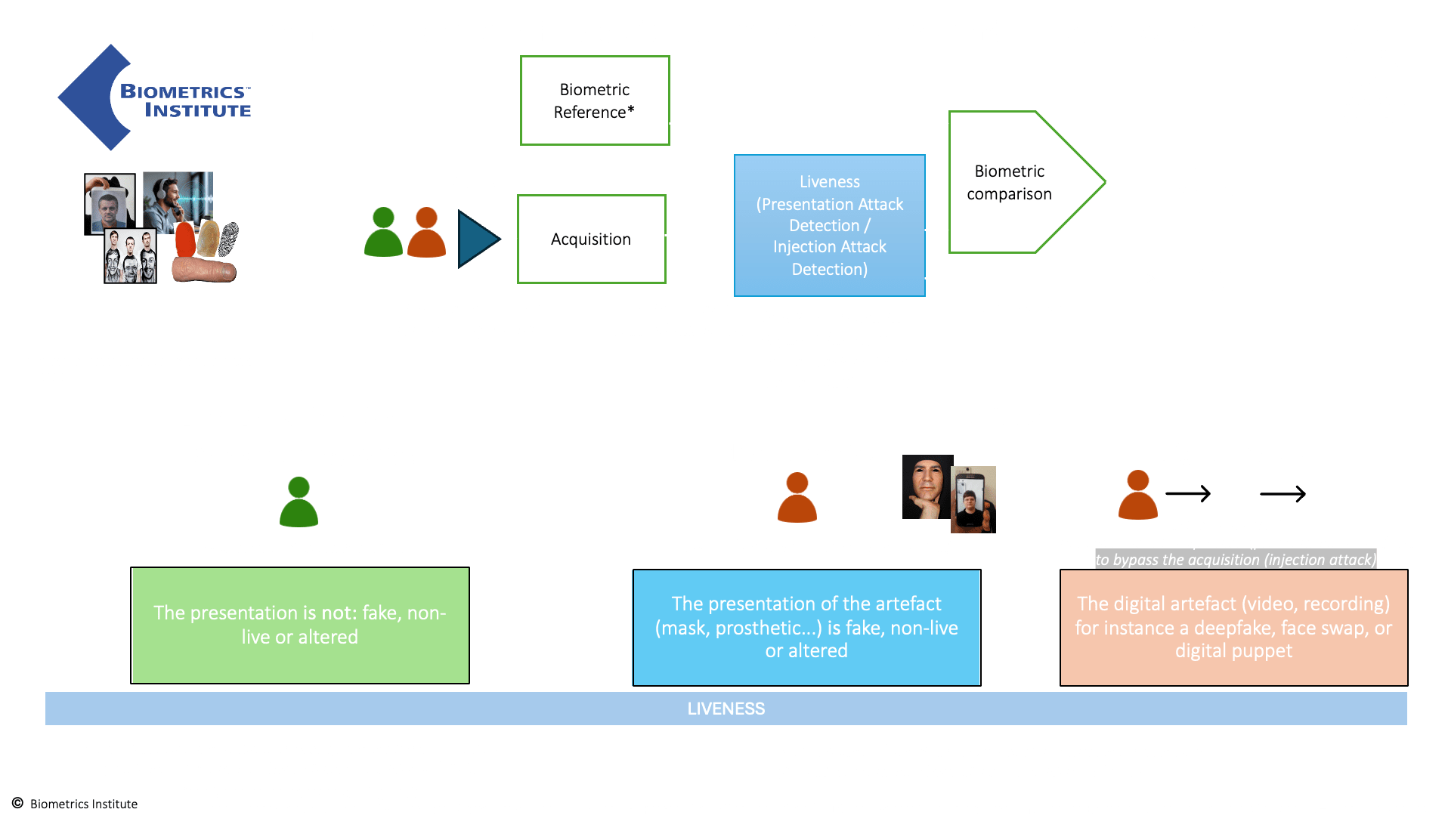

- Validate whether injected artefacts (images / video / requests) reach the capture pipeline.

- Measure detection robustness across client (app) and server layers.

- Produce actionable mitigations to reduce successful injection risk.

Two Workflows


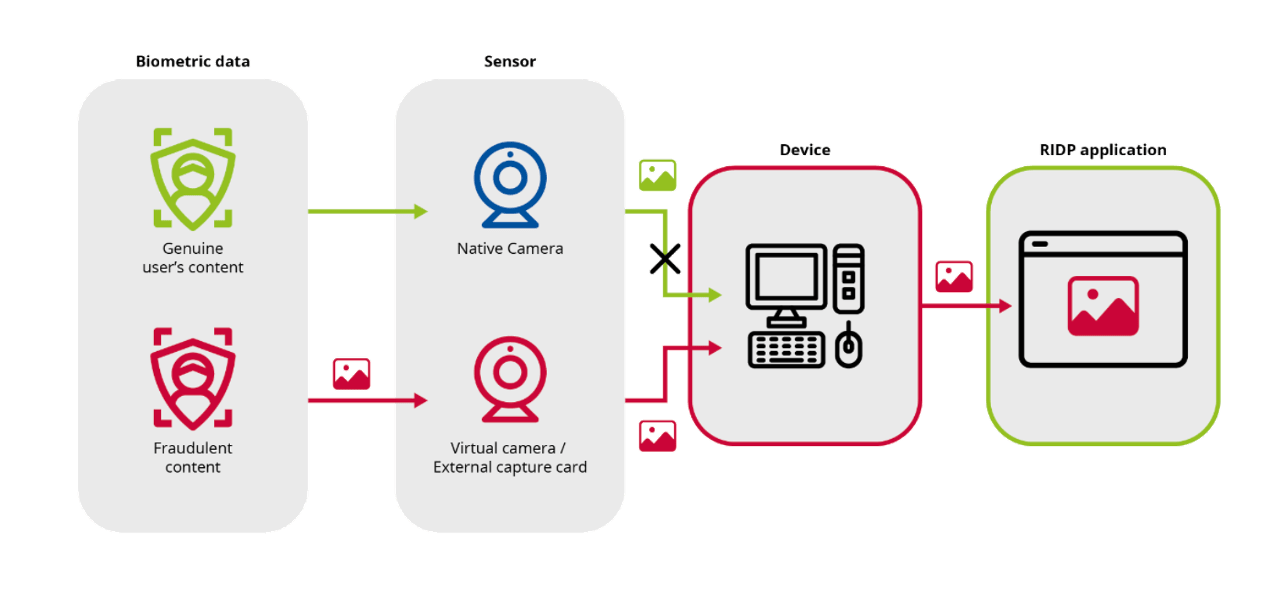

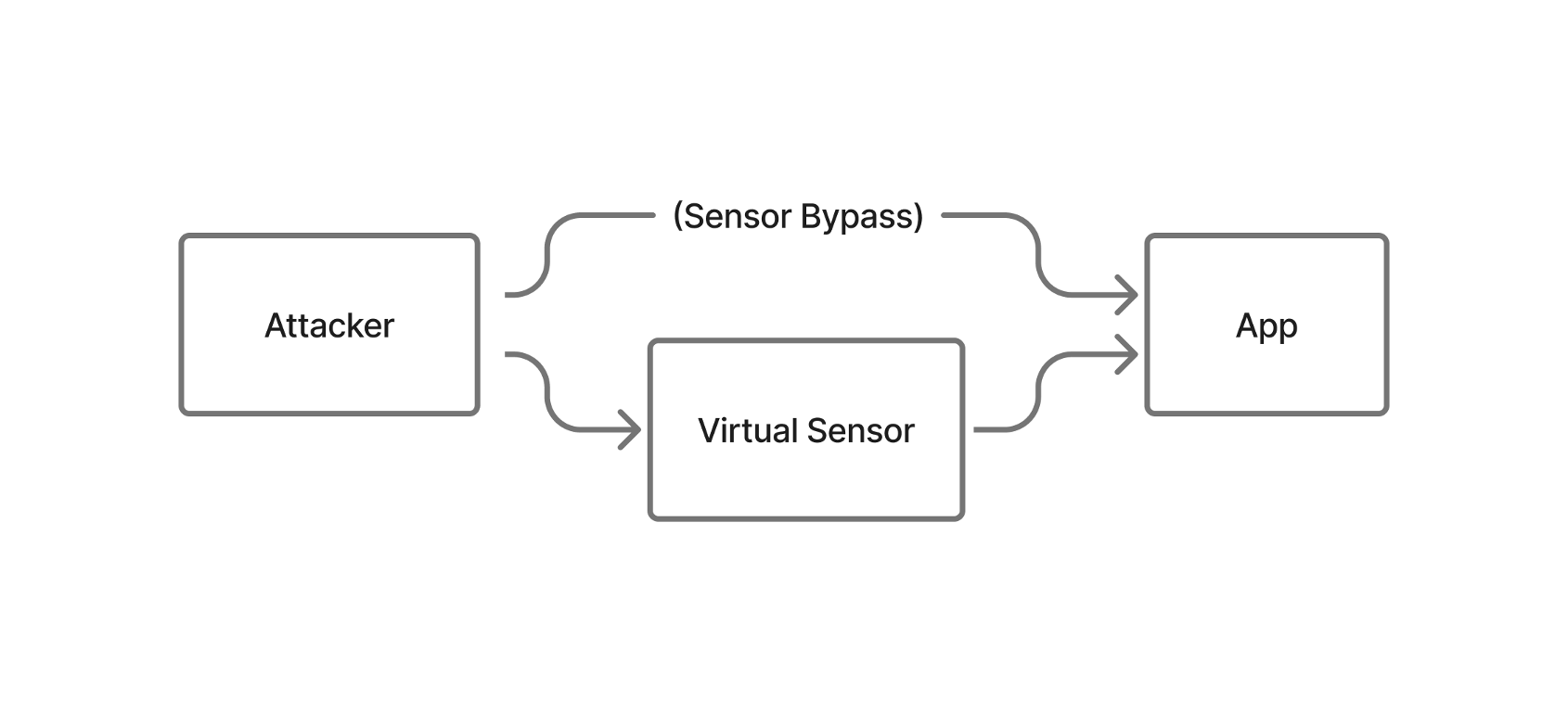

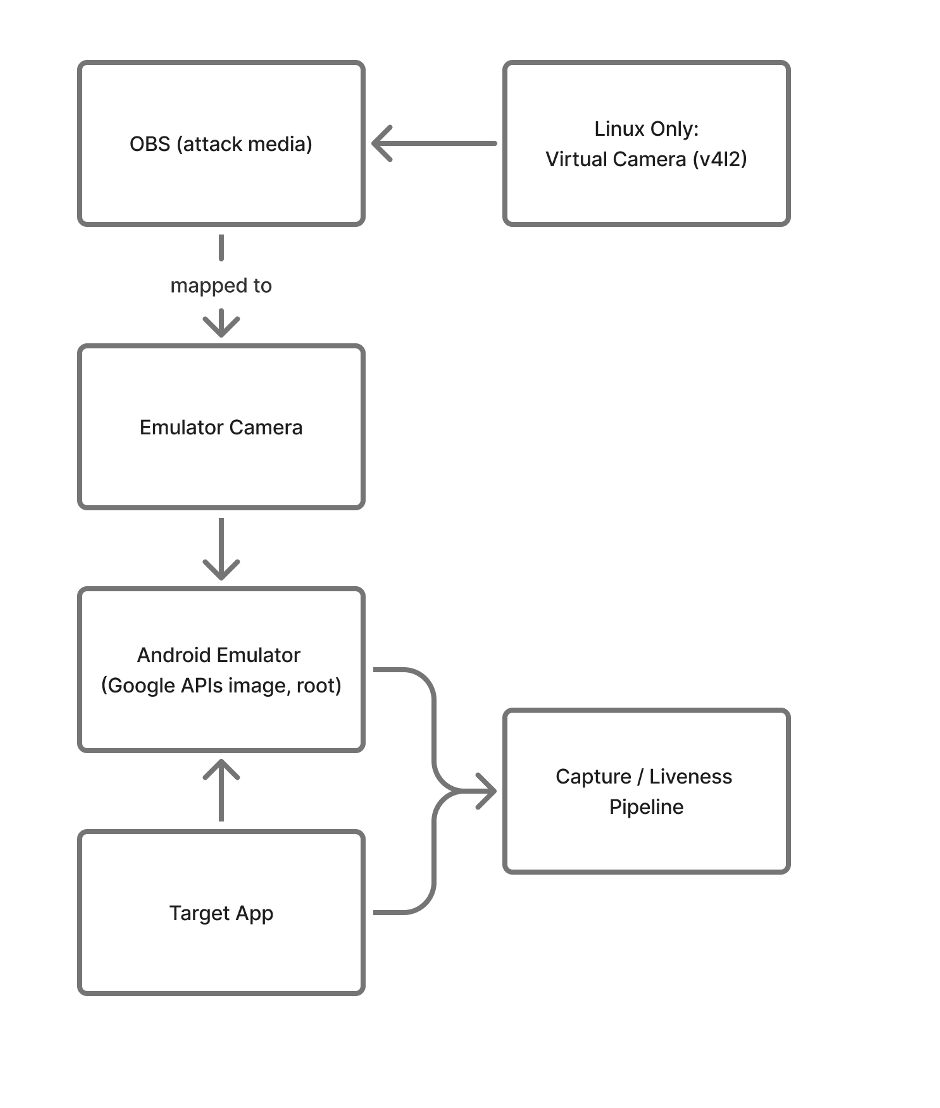

Workflow A: Virtual Sensor


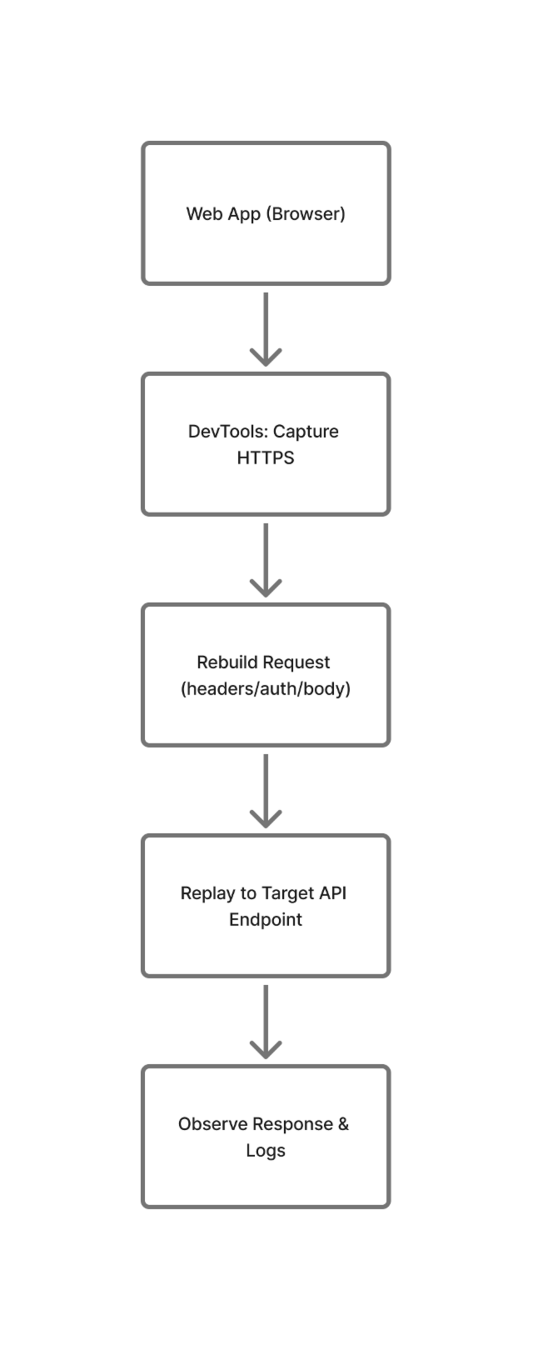

Workflow B:


Methods & Test Surface

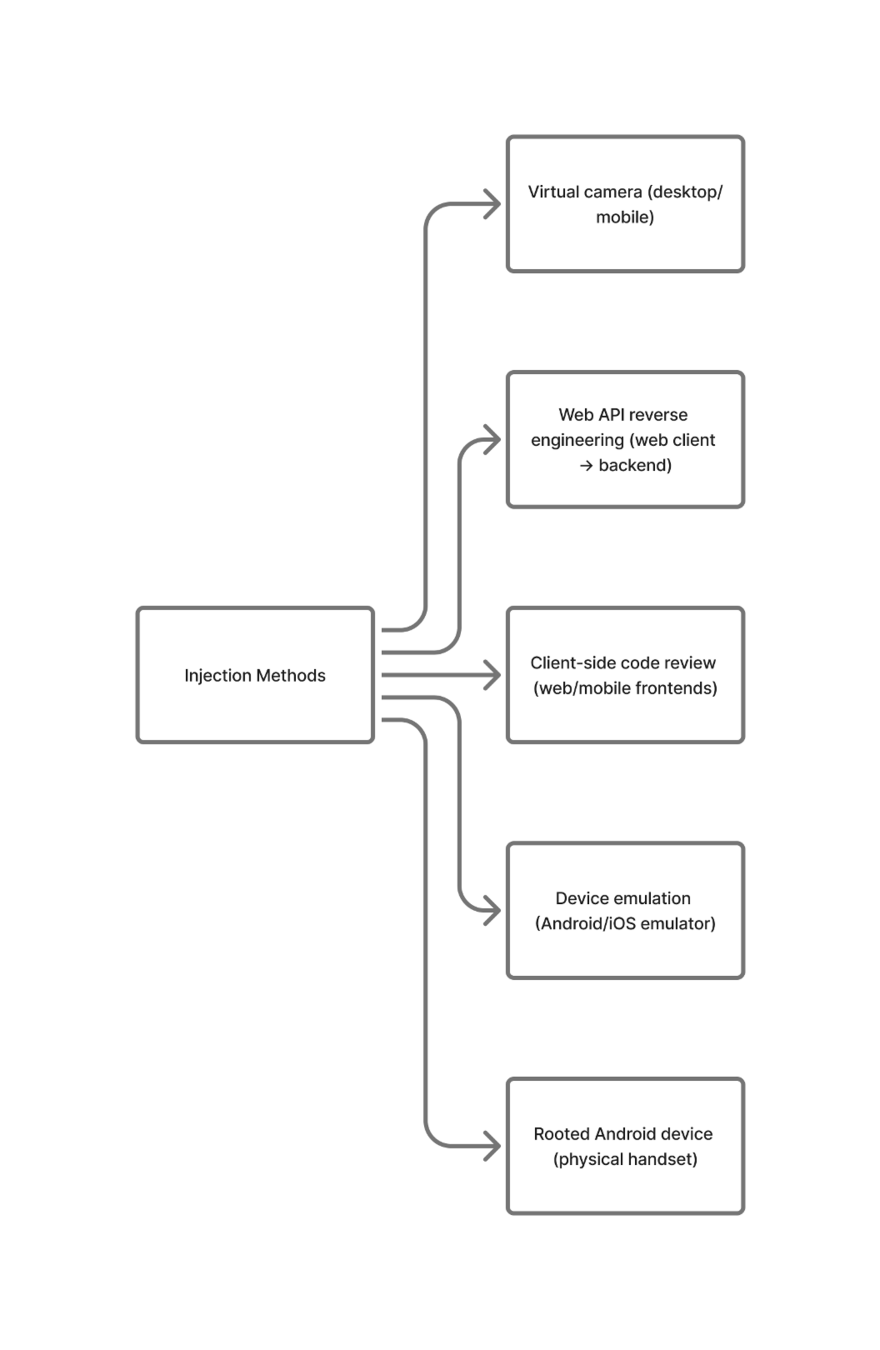

- Device emulation (rooted Android emulators / Google APIs images).

- Rooted physical devices — for low-level control & anti-emulation bypass.

- Virtual camera (OBS → v4l2 / host webcam mapping) to feed crafted video.

- Web API reverse-engineering & replay — capture → reconstruct → replay (with appropriate auth/headers).

- Client-side code review (web + mobile frontends) to identify validation gaps.

Test Volume — Standardisation & Example

Standard run size = 300 attack transactions per IAM (injection attack method)

Formula: 5 transactions × number of IAI (injection attack instrument) species × number of subjects

Example: 5 × 4 IAI × 15 subjects = 300 transactions

Rationale: repeatability, statistical confidence and coverage across IAI species & subjects.
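The run-size formula can be sketched in Python as below. This is a minimal sketch: the species labels and subject IDs are hypothetical placeholders, and `build_plan` simply enumerates one row per transaction so each attempt can carry its own evidence ID. The last helper applies the standard "rule of three" (zero errors in n trials gives an approximate 95% upper bound of 3/n), which shows the statistical value of the standard size: a clean run of 300 transactions bounds the per-IAM error rate at about 1%.

```python
from itertools import product

TRANSACTIONS_PER_CELL = 5  # attempts per (IAI species, subject) pair


def run_size(num_iai_species: int, num_subjects: int,
             per_cell: int = TRANSACTIONS_PER_CELL) -> int:
    """Attack transactions for one IAM: per_cell x species x subjects."""
    return per_cell * num_iai_species * num_subjects


def build_plan(iai_species, subjects, per_cell=TRANSACTIONS_PER_CELL):
    """One (species, subject, attempt_no) row per transaction, attempt_no starting at 1."""
    return list(product(iai_species, subjects, range(1, per_cell + 1)))


def zero_error_upper_bound(n: int) -> float:
    """Rule of three: 0 errors in n trials gives an approximate 95% upper bound of 3/n."""
    return 3 / n


if __name__ == "__main__":
    species = ["IAI-A", "IAI-B", "IAI-C", "IAI-D"]  # hypothetical species labels
    subjects = [f"S{i:02d}" for i in range(1, 16)]  # 15 hypothetical subject IDs
    plan = build_plan(species, subjects)
    print(run_size(len(species), len(subjects)), len(plan))  # 300 300
    print(zero_error_upper_bound(300))  # 0.01
```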

Tooling: Emulator + Virtual Camera (step list)

- Use a rooted Google APIs emulator image and install the target app.

- Map the emulator camera to the host webcam or a virtual device (v4l2loopback on Linux).

- Use OBS to stream prepared attack media into the virtual camera.

- Launch the app and drive the IAI sequences.
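A dry-run sketch of the emulator and virtual-camera steps, assuming a Linux host with the `v4l2loopback` kernel module installed, the Android SDK `emulator` and `adb` tools on PATH, and a Google APIs (not Google Play) AVD, since `adb root` is refused on Play production images. The function only returns the commands; the AVD name, APK path, device number and webcam index are hypothetical parameters. Pointing OBS at the loopback device (its Virtual Camera control) is left as a manual step here.

```python
import shlex


def injection_setup_commands(avd_name: str, apk_path: str,
                             video_nr: int = 10, webcam_index: int = 0) -> list:
    """Return (do not execute) the shell steps for emulator + virtual-camera injection.

    Hypothetical parameters: avd_name, apk_path, video_nr, webcam_index.
    Confirm which webcam<N> is the loopback device from the -webcam-list output.
    """
    avd = shlex.quote(avd_name)
    apk = shlex.quote(apk_path)
    return [
        # Create /dev/video<video_nr>; exclusive_caps=1 is commonly recommended so
        # capture-only consumers treat the loopback as a normal camera.
        f"sudo modprobe v4l2loopback devices=1 video_nr={video_nr} "
        "card_label=InjectionCam exclusive_caps=1",
        # List host cameras the emulator can use (webcam0, webcam1, ...).
        f"emulator -avd {avd} -webcam-list",
        # Boot the AVD in the background with both cameras mapped to the loopback feed.
        f"emulator -avd {avd} -camera-back webcam{webcam_index} "
        f"-camera-front webcam{webcam_index} &",
        "adb wait-for-device",
        # Restart adbd as root (refused on Google Play production images).
        "adb root",
        "adb wait-for-device",
        # Install, replacing any existing copy of the target app.
        f"adb install -r {apk}",
    ]


if __name__ == "__main__":
    for cmd in injection_setup_commands("Pixel_API_34", "target.apk"):
        print(cmd)
```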

API Replay: capture HTTPS traffic in browser dev tools → recreate the request structure → replay it to the endpoint (respecting auth/headers and crypto where possible).
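Browser dev tools can export captured traffic as a HAR file. The sketch below rebuilds one HAR 1.2 entry as an unsent `urllib.request.Request`, dropping HTTP/2 pseudo-headers and headers the client recomputes. `body_override` is a hypothetical hook for swapping in an injected payload, and the sample entry is invented. Replays of signed or encrypted payloads, or of expired session tokens, will still fail, as listed under Challenges & Limitations.

```python
import urllib.request
from typing import Optional

# Headers that urllib recomputes or that should not be replayed verbatim.
SKIP_HEADERS = {"host", "content-length", "connection", "accept-encoding"}


def request_from_har_entry(entry: dict,
                           body_override: Optional[bytes] = None) -> urllib.request.Request:
    """Rebuild one HAR `log.entries[i]` item as a urllib Request (not sent)."""
    har_req = entry["request"]
    headers = {
        h["name"]: h["value"]
        for h in har_req.get("headers", [])
        # ':authority', ':path' etc. are HTTP/2 pseudo-headers, not real headers.
        if not h["name"].startswith(":") and h["name"].lower() not in SKIP_HEADERS
    }
    data = body_override
    if data is None and "postData" in har_req:
        data = har_req["postData"].get("text", "").encode("utf-8")
    return urllib.request.Request(har_req["url"], data=data,
                                  headers=headers, method=har_req["method"])


if __name__ == "__main__":
    sample = {"request": {  # hypothetical captured entry
        "method": "POST",
        "url": "https://api.example.test/v1/liveness",
        "headers": [{"name": ":authority", "value": "api.example.test"},
                    {"name": "Content-Type", "value": "application/json"},
                    {"name": "Authorization", "value": "Bearer <token>"}],
        "postData": {"mimeType": "application/json", "text": '{"frame": "<base64 image>"}'},
    }}
    req = request_from_har_entry(sample)
    print(req.get_method(), req.full_url, req.header_items())
    # urllib.request.urlopen(req) would send it; only do so against in-scope test systems.
```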

Challenges & Limitations

- Emulator detection — apps may refuse or degrade functionality.

- App protections — signature/integrity checks can block modified packages.

- Encrypted/signed payloads — replay may fail without correct crypto/keys.

- Virtual camera detection — anti-virtual-device checks or metadata validation.

- System security controls & timing (OS-level protections and runtime timing constraints) can cause attempts to fail.

Implication: use a hybrid approach (emulators + rooted devices), and document unavoidable gaps and residual risk.
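Emulator detection often starts from well-known system properties. As a pre-flight check before a run, the sketch below parses `adb shell getprop` output (lines of the form `[key]: [value]`) and flags a few commonly cited indicators: `ro.kernel.qemu` / `ro.boot.qemu`, `goldfish`/`ranchu` hardware, and generic or `sdk_gphone` build strings. The indicator list is an assumption and far from exhaustive; commercial SDKs combine many more signals, so an empty result does not mean the emulator is undetectable.

```python
import re
import subprocess

GETPROP_LINE = re.compile(r"^\[(?P<key>[^\]]+)\]: \[(?P<value>.*)\]$")


def parse_getprop(output: str) -> dict:
    """Parse `adb shell getprop` output into a {property: value} dict."""
    props = {}
    for line in output.splitlines():
        m = GETPROP_LINE.match(line.strip())
        if m:
            props[m.group("key")] = m.group("value")
    return props


def read_device_props(serial=None) -> dict:
    """Query a connected device or emulator via adb (requires adb on PATH)."""
    cmd = ["adb"] + (["-s", serial] if serial else []) + ["shell", "getprop"]
    out = subprocess.run(cmd, capture_output=True, text=True, check=True).stdout
    return parse_getprop(out)


def emulator_indicators(props: dict) -> list:
    """Flag a few commonly cited emulator tells (heuristic, not exhaustive)."""
    hits = []
    for key in ("ro.kernel.qemu", "ro.boot.qemu"):
        if props.get(key) == "1":
            hits.append(f"{key}=1")
    hardware = props.get("ro.hardware", "")
    if hardware in {"goldfish", "ranchu"}:
        hits.append(f"ro.hardware={hardware}")
    fingerprint = props.get("ro.build.fingerprint", "")
    if fingerprint.startswith("generic") or "sdk_gphone" in fingerprint:
        hits.append("emulator-style build fingerprint")
    model = props.get("ro.product.model", "")
    if "sdk" in model.lower() or "emulator" in model.lower():
        hits.append(f"ro.product.model={model}")
    return hits


if __name__ == "__main__":
    sample = "[ro.hardware]: [ranchu]\n[ro.boot.qemu]: [1]\n"  # hypothetical getprop excerpt
    print(emulator_indicators(parse_getprop(sample)))  # ['ro.boot.qemu=1', 'ro.hardware=ranchu']
```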


Reporting & Metrics

- Per-attempt evidence (video frames, request captures, logs).

- Compute APCER (Attack Presentation Classification Error Rate) & BPCER (Bona Fide Presentation Classification Error Rate).

- Per-IAM results, reproducible artefacts, confidence intervals.
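A minimal sketch of the metric calculations. Following the ISO/IEC 30107-3 convention, APCER is computed per IAI species and the worst species is reported; BPCER is the share of bona fide transactions wrongly rejected as attacks. The Wilson score interval is one reasonable choice for the confidence ranges (Clopper-Pearson is a more conservative alternative). The input record shapes and the sample log are assumptions about how per-attempt results are recorded, and whether CEN/TS 18099 prescribes exactly these definitions for injection attacks should be checked against the specification.

```python
import math
from collections import defaultdict


def wilson_interval(errors: int, n: int, z: float = 1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = errors / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def apcer_by_species(attack_results):
    """attack_results: (iai_species, accepted_as_bona_fide) pairs, one per attack.

    Returns {species: (apcer, errors, n)}.
    """
    totals, errors = defaultdict(int), defaultdict(int)
    for species, accepted in attack_results:
        totals[species] += 1
        errors[species] += int(accepted)
    return {s: (errors[s] / totals[s], errors[s], totals[s]) for s in totals}


def bpcer(bona_fide_rejected):
    """One bool per bona fide transaction: True if it was rejected as an attack."""
    results = list(bona_fide_rejected)
    return sum(results) / len(results)


if __name__ == "__main__":
    # Hypothetical log: 75 attacks per species, two accepted for IAI-B.
    log = [(s, False) for s in ("IAI-A", "IAI-B", "IAI-C", "IAI-D") for _ in range(75)]
    log[75] = ("IAI-B", True)
    log[76] = ("IAI-B", True)
    per_species = apcer_by_species(log)
    worst = max(per_species, key=lambda s: per_species[s][0])
    rate, k, n = per_species[worst]
    print(worst, round(rate, 4), wilson_interval(k, n))
```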

Mitigations

Risk Insights & Mitigations

- Device binding & attestation (hardware IDs, secure elements).

- Camera-property checks (sensor metadata, rolling-shutter / noise fingerprints).

- Loop/static & playback detection (motion, physiological signals).

- Strengthen client integrity (code signing, runtime attestation) and server validation (schema + crypto checks).

- Recommend red-team follow-up, regression tests and continuous monitoring.
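One way to make server validation resist request replay is to require a signature over a timestamp, a single-use nonce and the body. The sketch below is hypothetical and deliberately simplified: it uses a shared HMAC-SHA256 key and an in-memory nonce cache, whereas a key embedded in an app is extractable, so a production design would keep signing keys in hardware-backed storage tied to device attestation and share the nonce store across servers.

```python
import hashlib
import hmac
import time
from typing import Optional


class ReplayGuard:
    """Hypothetical server-side check: HMAC-SHA256 over (timestamp, nonce, body),
    a freshness window, and a single-use nonce cache."""

    def __init__(self, key: bytes, max_skew_s: int = 30):
        self.key = key
        self.max_skew_s = max_skew_s
        self.seen = {}  # nonce -> timestamp; in-memory stand-in for a shared store

    def sign(self, body: bytes, nonce: str, ts: int) -> str:
        message = f"{ts}.{nonce}.".encode() + body
        return hmac.new(self.key, message, hashlib.sha256).hexdigest()

    def verify(self, body: bytes, nonce: str, ts: int, signature: str,
               now: Optional[int] = None) -> bool:
        now = int(time.time()) if now is None else now
        if abs(now - ts) > self.max_skew_s:
            return False  # stale or future-dated request
        if not hmac.compare_digest(self.sign(body, nonce, ts), signature):
            return False  # body, nonce or timestamp altered, or wrong key
        # Forget nonces old enough that the timestamp check already rejects them.
        self.seen = {n: t for n, t in self.seen.items() if now - t <= self.max_skew_s}
        if nonce in self.seen:
            return False  # replayed request
        self.seen[nonce] = ts
        return True
```

On the client side, the equivalent of `sign` would be called with a fresh random nonce per request (for example `secrets.token_hex(16)`), so a captured request cannot be replayed even when its signature is valid.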

Suggested Outcomes

Deliverables

- Full test log + per-attempt evidence.

- APCER/BPCER calculations and confidence ranges.

- Prioritised remediation roadmap.

Running the Attacks

- Confirm subject/sample counts & IAI species list.

- Choose platforms (iOS / Android / Web) and device mix (emulator vs real).

- Schedule the test window and agree acceptance criteria.

Standards & Guidance (EU / European / international)

- CEN/TS 18099: Biometric data injection attack detection (European technical specification). https://standards.iteh.ai/catalog/standards/cen/43336798-87a4-49d1-9a0b-4e74c73345a7/cen-ts-18099-2024

- ENISA: Remote Identity Proofing: Good Practices (covers injection / video injection threats and countermeasures). https://www.enisa.europa.eu/sites/default/files/2024-11/Remote%20ID%20Proofing%20Good%20Practices_en_0.pdf

- ISO/IEC work on biometric injection attack detection (drafts / work items): watch ISO/CEN collaborations for international alignment.


Injection Testing

By Ted Dunstone
