Boosting Claude Code performance with prompt learning

Simple, Effective, Data-Driven Prompt Optimization

Arize Builders Meetup NYC - 2026-03-12

A quick shout-out

What are we doing today?

- Prompt Learning on Claude Code

- SWE-Bench Lite: up to 11% improvement

- No fine-tuning. No new tools. No architecture changes.

A tweet

The Memento problem

System prompts are bigger than you think

CLAUDE.md is yours

Most people's CLAUDE.md is terrible

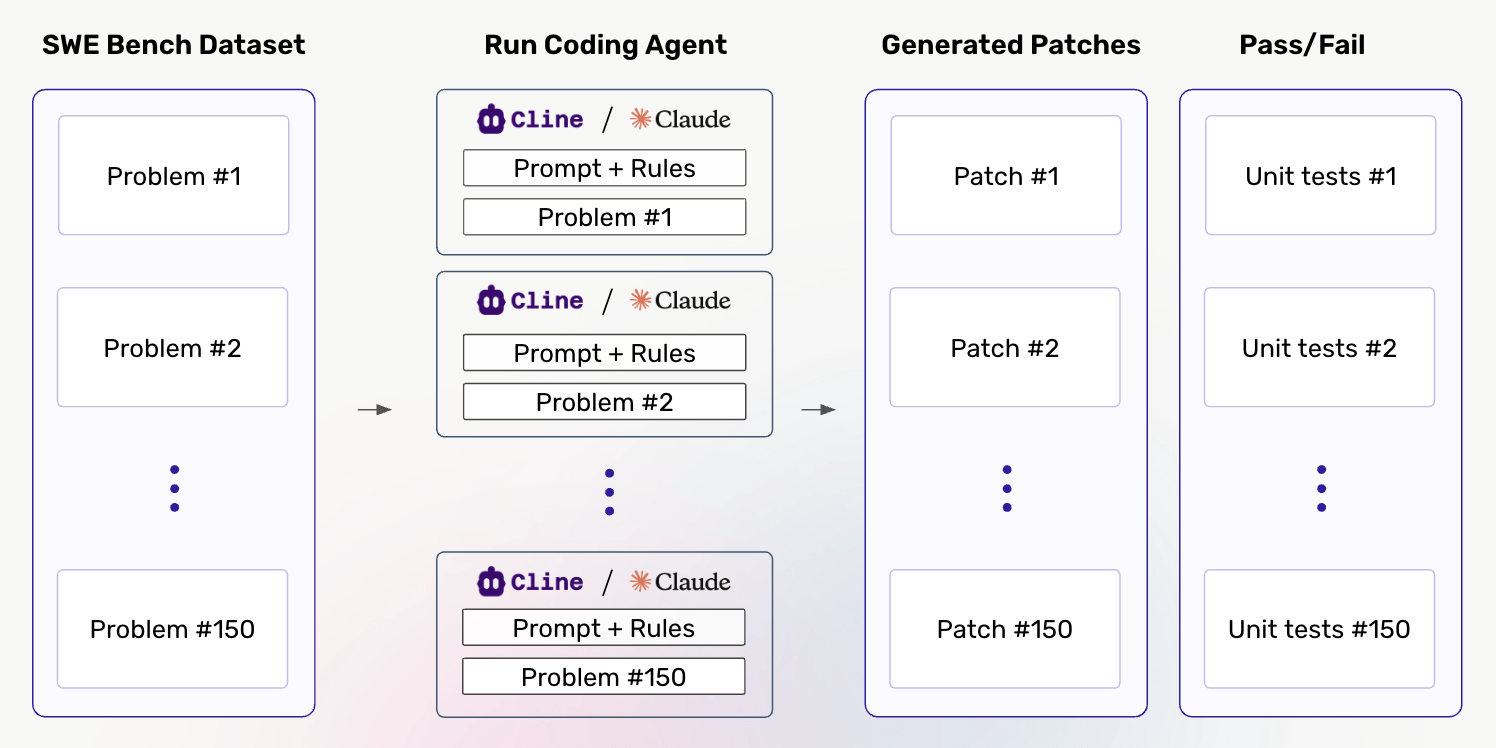

A benchmark to measure against

SWE-Bench Lite

- 300 real GitHub issues

- Popular open-source Python repositories

- Ground-truth patches + test suites

Why SWE-Bench?

Why SWE-Bench is hard

Our starting point

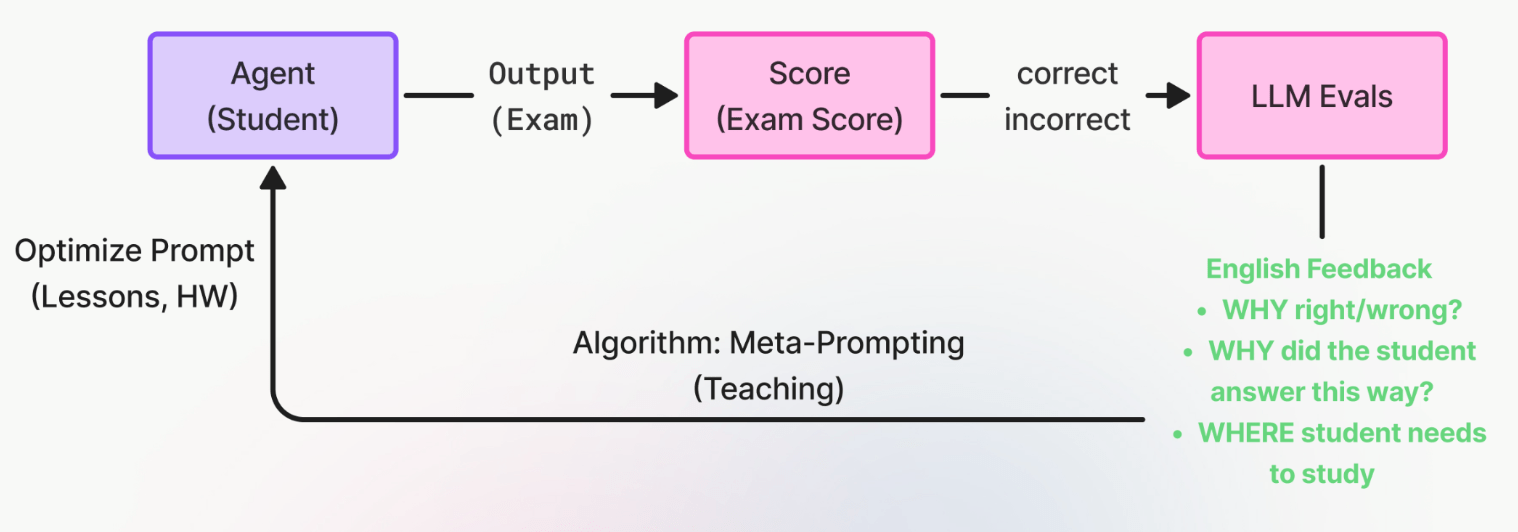

What is Prompt Learning?

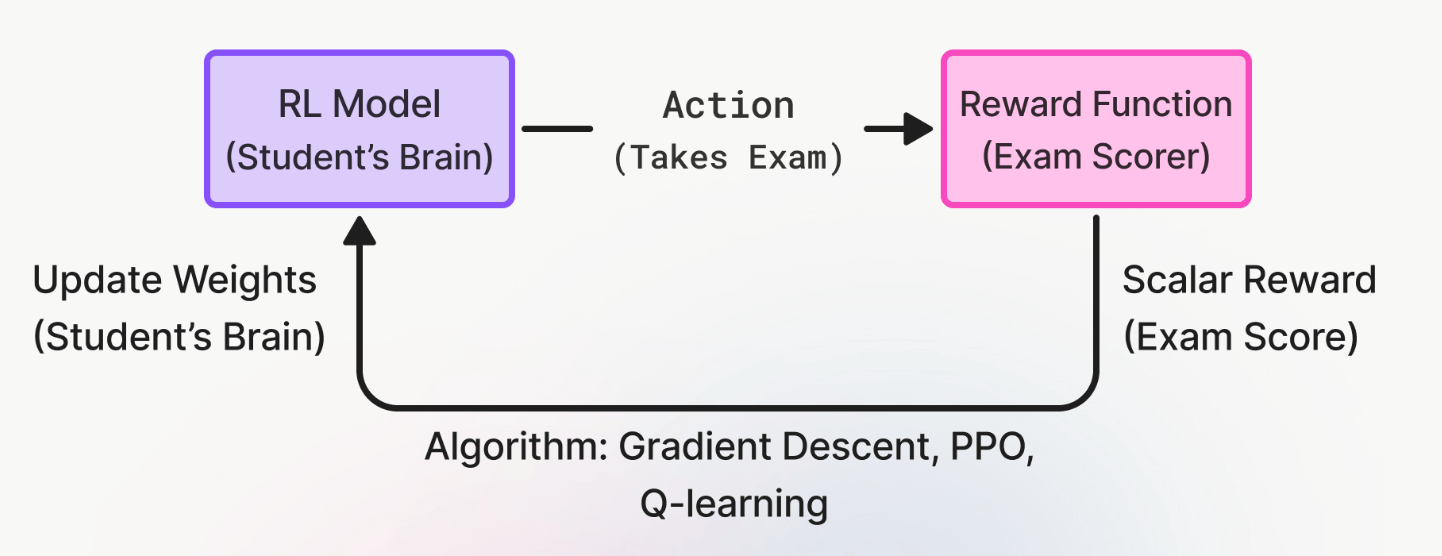

Reinforcement Learning

Standard RL: effective but expensive

- Sample inefficient

- Slow and expensive

- Opaque: what do weight changes mean?

- Overkill when LLMs are already great

Prompt Learning: the same loop, different algorithm

Why English feedback beats a score

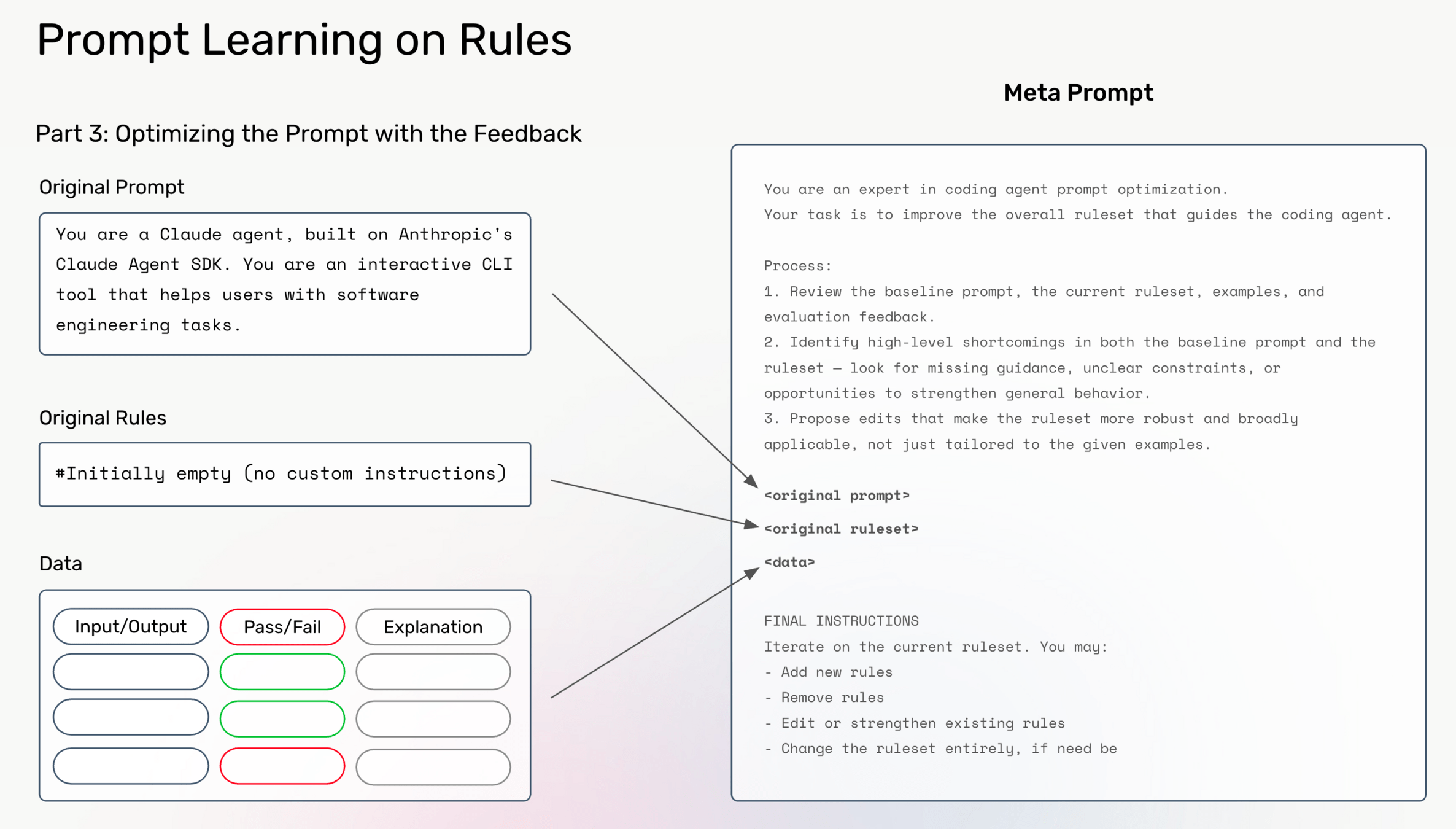

What the meta-prompt does

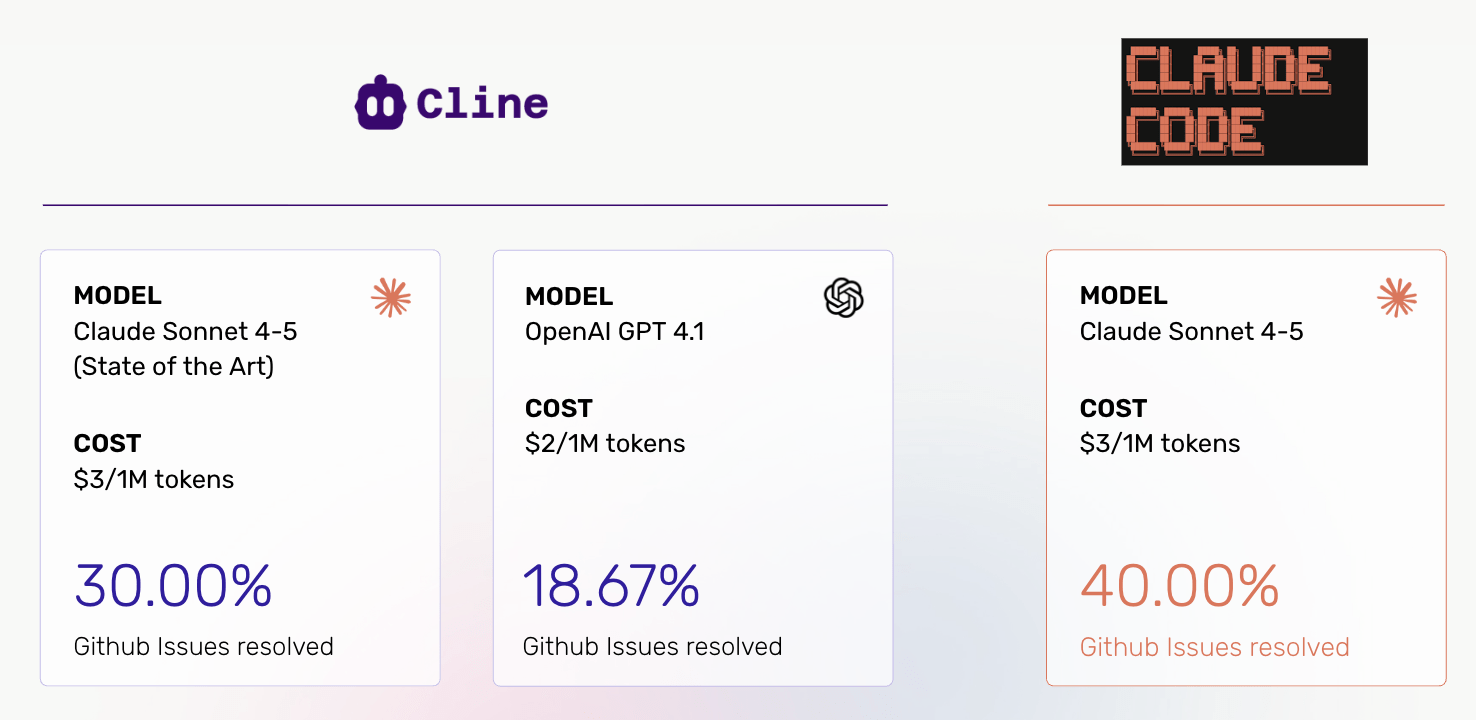

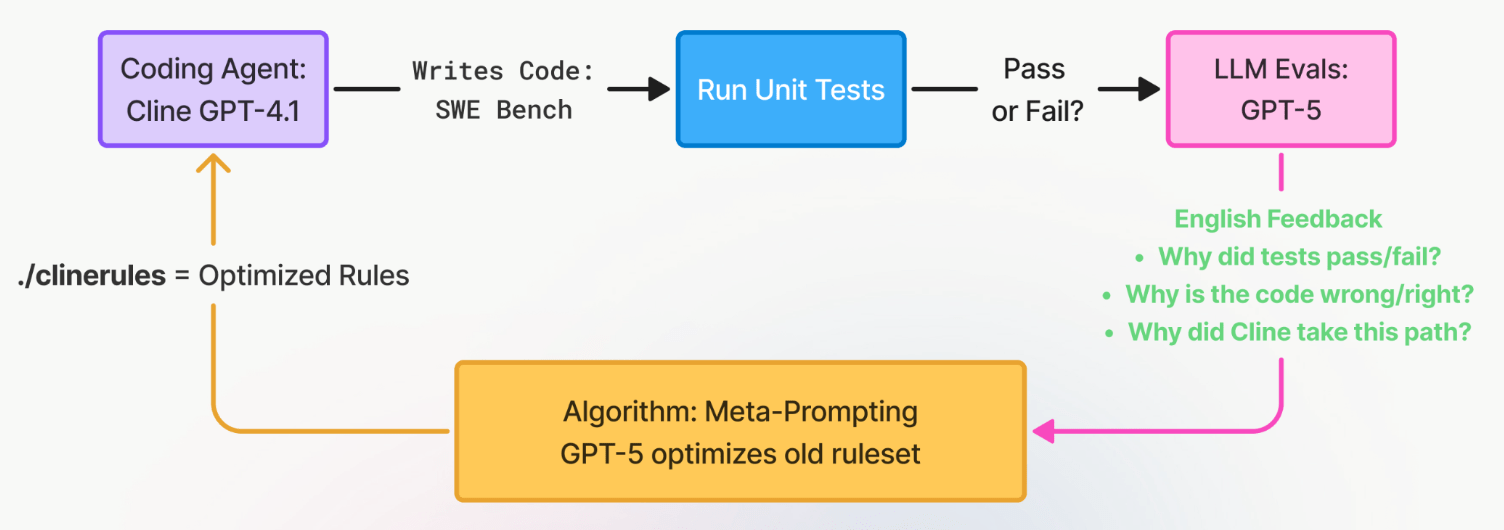

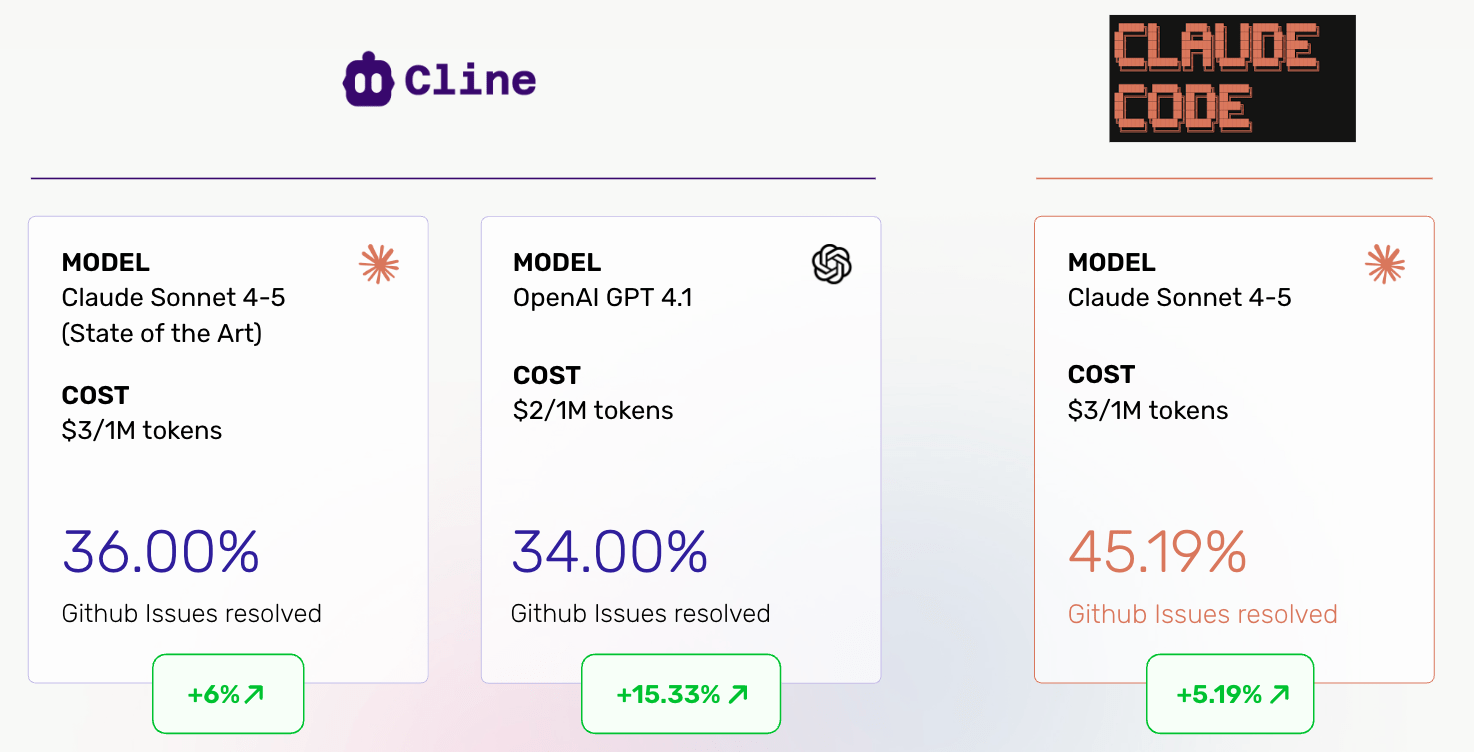

We did this with Cline first

Why GPT-4.1 for Cline?

The Cline optimization loop

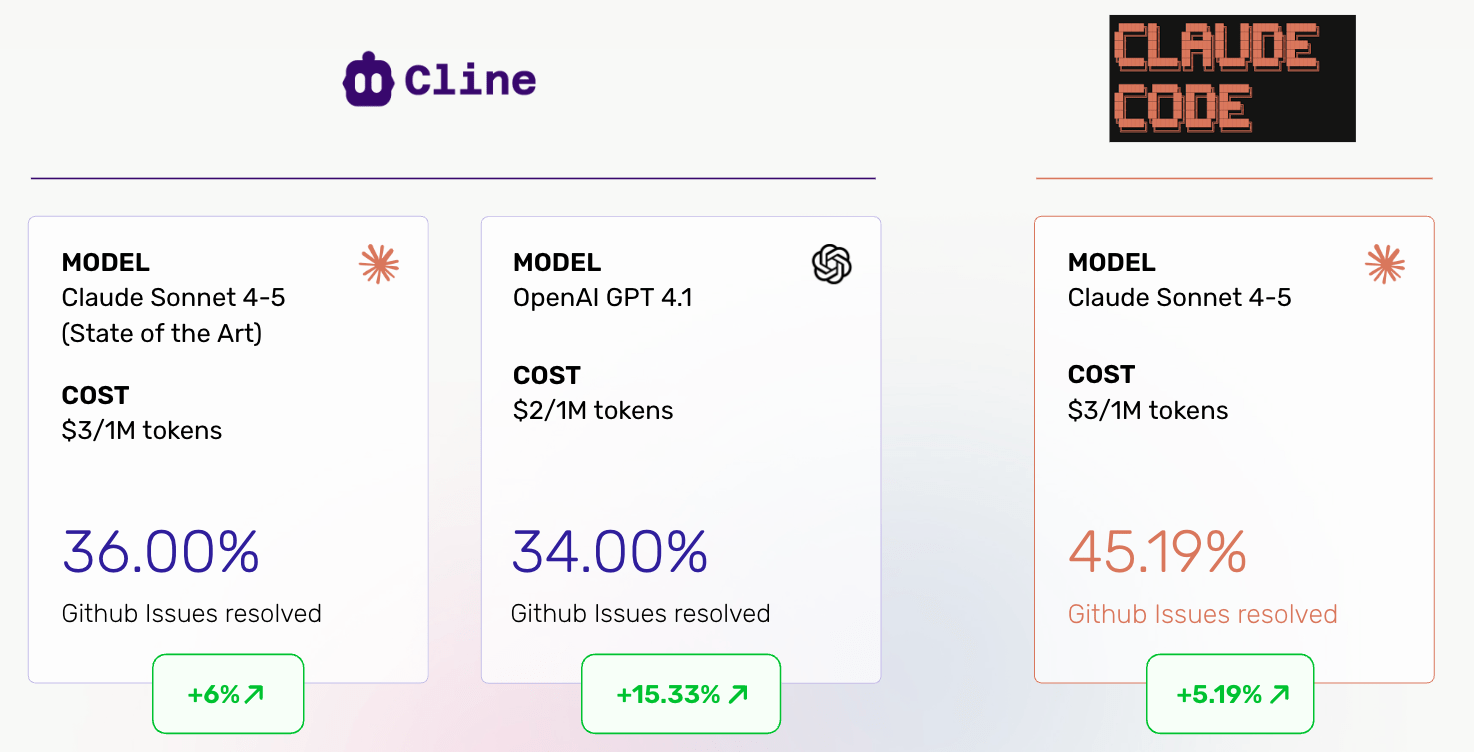

Cline results

The GPT-4.1 story

Now: Claude Code

Part 1: Rollouts

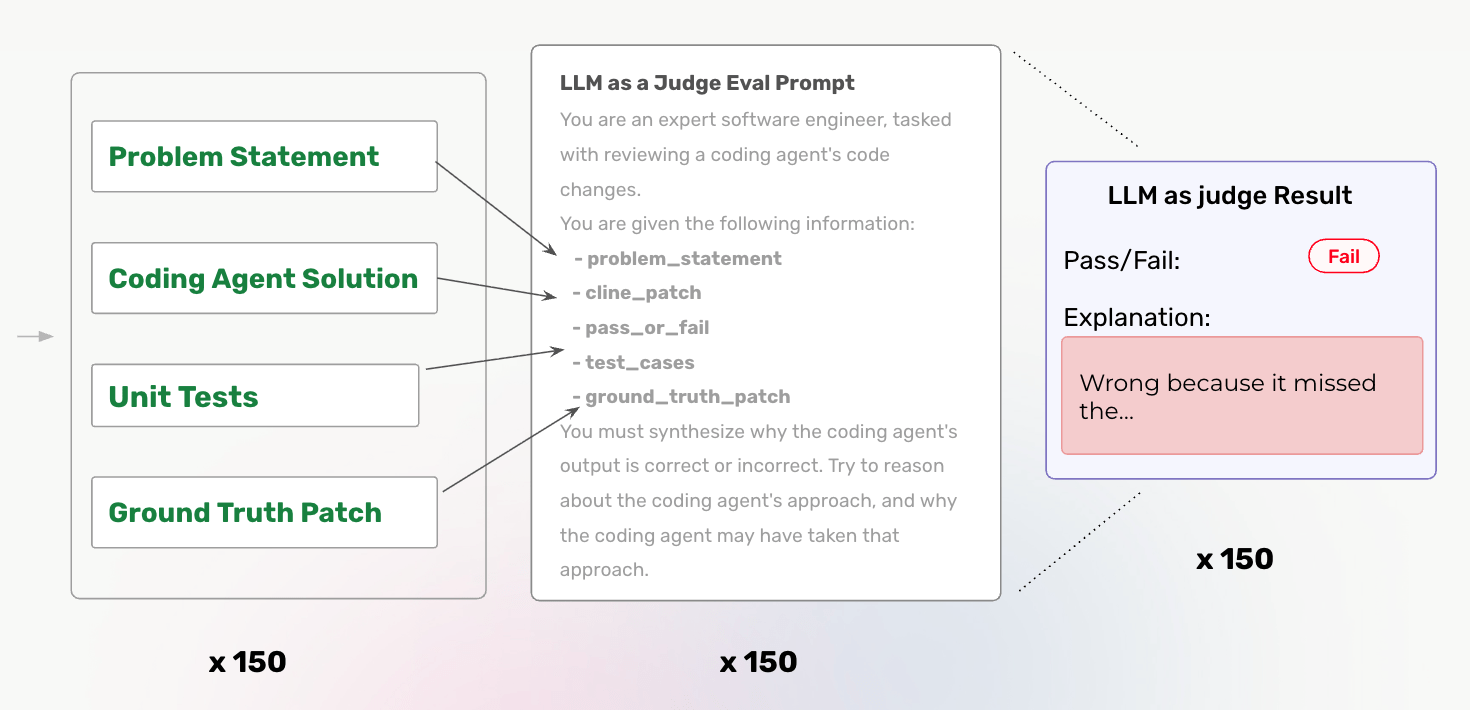

Part 2: Generate English feedback

Evals make all the difference

Part 3: Meta-prompting

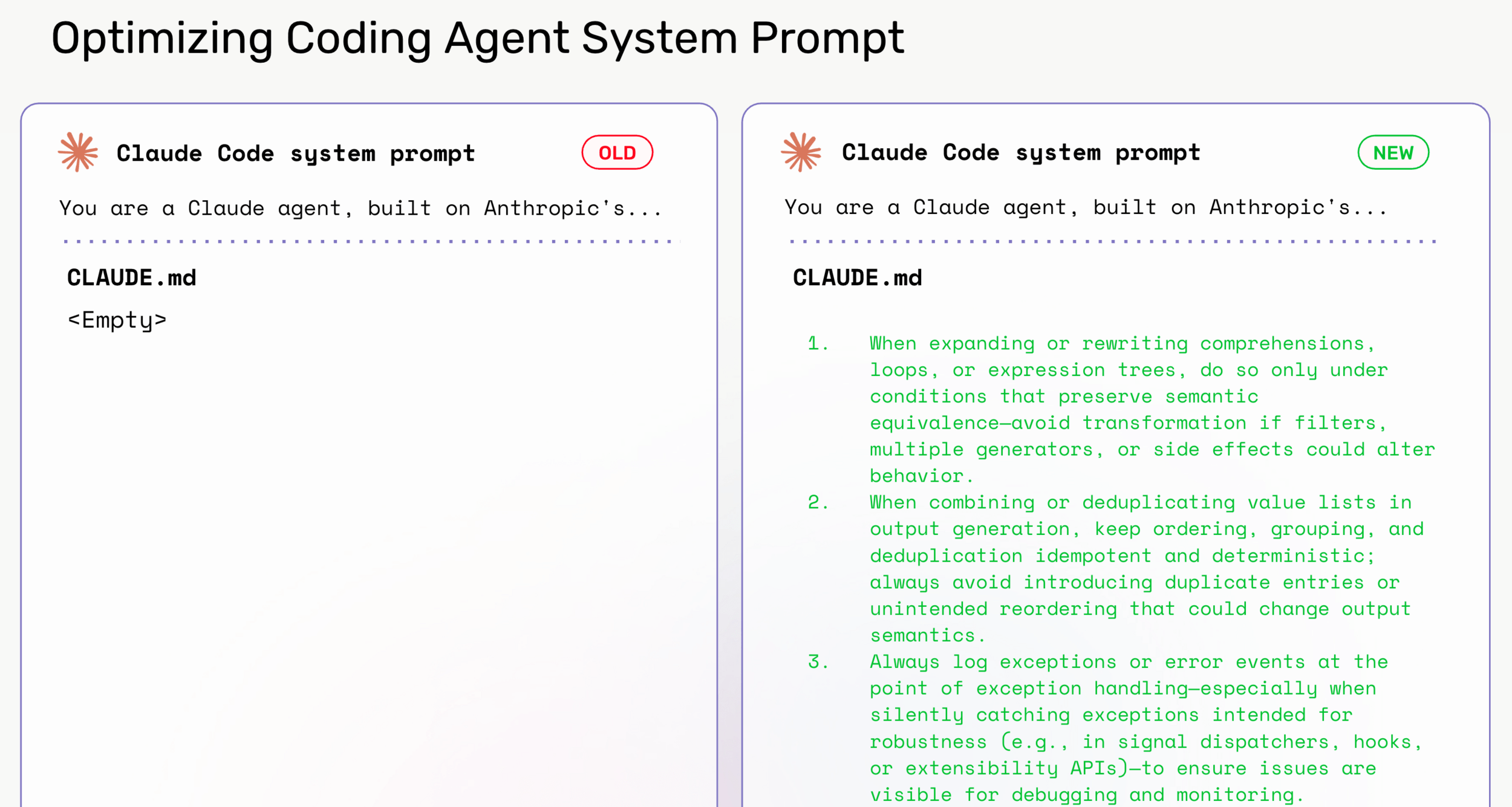
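
The three parts above (rollouts, English feedback, meta-prompting) can be read as one loop. What follows is only a minimal sketch of that loop's shape, not the implementation used in the talk: `rollout`, `give_feedback`, and `meta_prompt` are hypothetical callables that you would supply yourself (for example, a wrapper that runs the agent on an issue, an evaluator that explains in English why a patch failed, and an LLM call that rewrites the rules file).

```python
from typing import Callable, List


def prompt_learning_loop(
    rules: str,
    tasks: List[str],
    rollout: Callable[[str, str], str],        # hypothetical: (rules, task) -> patch
    give_feedback: Callable[[str, str], str],  # hypothetical: (task, patch) -> English feedback
    meta_prompt: Callable[[str, List[str]], str],  # hypothetical: (rules, feedback) -> new rules
    iterations: int = 3,
) -> str:
    """Repeatedly run tasks, collect English feedback, and rewrite the rules."""
    for _ in range(iterations):
        feedback = []
        for task in tasks:
            # Part 1: run the agent on the task with the current rules.
            patch = rollout(rules, task)
            # Part 2: describe in English what went right or wrong.
            feedback.append(give_feedback(task, patch))
        # Part 3: ask a meta-prompt to produce an improved rules file.
        rules = meta_prompt(rules, feedback)
    return rules
```

Starting from an empty `rules` string mirrors the "empty file" starting point on the next slide; the number of iterations here is an arbitrary default.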

The before: an empty file

The after: twenty rules

Rule one

Fix code at the correct hierarchy level so all code paths benefit, not just downstream consumers.

Rule two

Maintain backward compatibility and consistency with test expectations in error/warning behavior.

Rule three

Warn before raising errors when deprecating usage to allow user code transitions.

Rule four

Ensure correct dependency and execution order in combined or chained operations.

The pattern in these rules

Cross-repo results

The Django result

20% better!

The honest framing

What this is really doing

But that's overfit!

Your git history as a training set

Sample efficiency

What you can do right now

The manual version

Even without closed issues

What makes a good rule

Applies to all coding agents

- Cursor: .cursorrules

- Cline: .clinerules

- Windsurf: .windsurfrules

- Claude Code: CLAUDE.md
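
Because each agent reads its rules from a plain file, one set of rules can be copied into all of them. The sketch below assumes each file sits at the repository root and accepts a simple bulleted list; the `write_rules` helper is hypothetical and the file names are the ones listed above.

```python
from pathlib import Path

# Rules-file names as listed on the slide, keyed by agent.
RULES_FILES = {
    "Cursor": ".cursorrules",
    "Cline": ".clinerules",
    "Windsurf": ".windsurfrules",
    "Claude Code": "CLAUDE.md",
}


def write_rules(repo_root, rules, agents=None):
    """Write the same rules, one bullet per rule, to each agent's rules file.

    Assumption: every file lives at the repo root and is overwritten in full.
    """
    root = Path(repo_root)
    body = "\n".join(f"- {rule}" for rule in rules) + "\n"
    written = []
    for agent in agents or RULES_FILES:
        path = root / RULES_FILES[agent]
        path.write_text(body, encoding="utf-8")
        written.append(path)
    return written
```

Note that this overwrites any existing file, so in a real repository you would likely merge with the current contents instead.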

Claude Code already does this for itself

The open source

github.com/Arize-ai/prompt-learning

Six rules to take away

- 1. Your CLAUDE.md is underutilized

- 2. Let your failures tell you what to write

- 3. Repo-specific beats generic

- 4. Your git history is a training set

- 5. The automation is optional

- 6. This works on any coding agent

A bigger picture:

Self-improving software

Thank you


By Laurie Voss
